On June 29, 2015 at the ISTE Conference in Philadelphia, Audrey Watters spoke on a panel called “Is it Time to Give Up on Computers in Schools?”. The transcript of her speech can be found here. Below is the longer speech she prepared for the occasion, which she offered to Hybrid Pedagogy to publish.

Last year, Gary Stager joked that we should submit a proposal to ISTE for a panel titled “Is It Time to Give Up on Computers in Schools?” No surprise, it was rejected. But this year, he submitted again, and the very same proposal was accepted.

So here we are today, making the case for why this whole education technology thing has gone alarmingly off the rails and it’s time to scrap the entire effort.

ISTE is, of course, the perfect place to deliver this talk.

ISTE is the perfect place to question what the hell we’re doing in ed-tech in part because this has become a conference and an organization dominated by exhibitors. Ed-tech, in product and policy, is similarly dominated by brands. 60% of ISTE’s revenue comes from the conference exhibitors and corporate relations; although ISTE touts itself as a membership organization, just 12% of its revenue comes from members. Take one step into that massive shit-show called the Expo Hall and it’s hard not to agree: “Yes, it is time to give up on computers in schools.”

A little history: The International Council for Computers in Education was founded in 1979, and a decade later it merged with the International Association for Computing Education to form ISTE, the International Society for Technology in Education. While that new organization erased the words “computer” and “computing” from its new title, these were all entities — and most importantly, educators, in their early days at least — at the forefront of a push to use “microcomputers” — not just mainframes — in education.

Many of the earliest members — those who’ve attended the conference for decades now, back when it was still called NECC (the National Educational Computing Conference) — were still hard-pressed to make the arguments back in their schools and districts that computers could be educational in the face of a system that was skeptical of these expensive machines and that had yet to recognize personal computers’ ability to enhance the bureaucracy of schooling and the efficiency of standardized testing.

But as Seymour Papert noted in The Children’s Machine,

Little by little the subversive features of the computer were eroded away: Instead of cutting across and so challenging the very idea of subject boundaries, the computer now defined a new subject; instead of changing the emphasis from impersonal curriculum to excited live exploration by students, the computer was now used to reinforce School’s ways. What had started as a subversive instrument of change was neutralized by the system and converted into an instrument of consolidation.

I’m going to say something sacrilegious here. (I’m going to say a bunch of things that are sacrilegious — I mean, this is an ISTE panel on getting computers out of the classroom.) But I think Seymour was naive. All of us, really. I think that we were blinded in the early days of personal computing about the power and possibility of computers. I think we were similarly blinded in the early days of the World Wide Web.

Sure, there are subversive features of the computer; but I think the computer’s features also involve neoliberalism, late stage capitalism, imperialism, libertarianism, and environmental destruction. They now involve high stakes investment by the global 1% — it’s going to be a $60 billion market by 2018, we’re told. Computers involve the systematic de-funding and dismantling of a public school system and a devaluation of human labor. They involve the consolidation of corporate and governmental power. They are designed by white men for white men. They involve scientific management. They involve widespread surveillance and, for many students, a more efficient school-to-prison pipeline — let’s name this for what it is: despite our talk about meritocracy, it’s a racially segregated, class-based system of education, one that technology as easily entrenches as subverts.

And so I think it’s time now to recognize that if we want education that’s more just and more equitable and more sustainable, we need to get the ideologies that are hardwired into computers out of the classroom. “We become blind to the ideological meaning of our technologies,” Neil Postman once cautioned. We gaze glassy-eyed at the new features in the latest hardware and software (it’s always about the latest app, and yet we know there’s nothing new there); instead we must stare critically at the belief systems that are embedded in these tools.

In the early days of educational computing, it was often up to the individual, innovative teacher to put a personal computer in their classroom. Sometimes they paid for the computer out of their own pocket. These were days of experimentation, and as Seymour teaches us, re-imagining what these powerful machines could enable students to do. (That’s why the computer matters, Seymour argued — something you could tinker and think with. Not this other word that ISTE now invokes, “technology.”)

And then came the network.

You’ll often hear the Internet hailed as one of the greatest inventions of mankind — something that connects us all and that has, thanks to the World Wide Web, enabled the publishing and sharing of ideas at an unprecedented pace and scale. The Internet and the Web are supposed to be decentralized — “small pieces loosely joined.” Perhaps.

What “the network” introduced in educational technology was also a more centralized control of computers. No longer was it up to the individual, innovative teacher to have a computer in her classroom. It was up to the district, the Central Office. It was up to IT. The sorts of hardware and software that were purchased had to meet those needs — the needs and the desire of the administration, not the needs and the desires of innovative educators, and certainly not the needs and desires of students.

The mainframe never went away. And now, virtualized, we call it “the cloud.”

Computers and mainframes and networks are a point of control. Computers are a tool of surveillance. Databases and data are how we are disciplined and punished. Quite to the contrary of Seymour’s hopes that computers will liberate learners, this will be how all of us will increasingly be monitored and managed.

If we look at the history of computers, we shouldn’t be that surprised. The computers’ origins are as weapons of war: Alan Turing, Bletchley Park, code-breakers, and cryptography. IBM in Germany and its development of machines and databases that it sold to the Nazis in order to efficiently collect the identity and whereabouts of Jews.

The latter should give us pause as we tout programs and policies that collect massive amounts of data — “big data.” The algorithms that computers facilitate drive more and more of our lives. We live in what law professor Frank Pasquale calls “the black box society.” We are tracked by technology; we are tracked by companies; we are tracked by our employers; we are tracked by the government, and “we have no clear idea of just how far much of this information can travel, how it is used, or its consequences.”

Our access to information is constrained by these algorithms. Our choices, our students’ choices are constrained by these algorithms — and we do not even recognize it, let alone challenge it.

Plenty of educators here hail Google as a benevolent force, for example. (It’s not. It’s a corporation beholden to its shareholders.) Educators readily brand themselves as Google certified teachers. They have their students work with Google’s various tools, with nary a concern about the implications. There’s little thought about the Terms of Service, the privacy policy, the data mining. There’s little recognition that Google is, according to its revenue at least, an advertising company. It is also a massive system of data collection and analysis. As privacy researcher Chris Soghoian quipped on Twitter, Google has built the greatest global surveillance system. It’s no surprise that the NSA has sought to use it too.

And the stakes are high here not simply because the NSA and Google are both watching to see what we click on. The stakes are high here not simply because Google now makes military drones and military robots. The stakes are high here not simply because Google has expanded beyond search and cloud-based software into Internet-connected devices in our homes.

The stakes are high here in part because all this highlights Google’s thirst for data — our data. The stakes are high here because we have convinced ourselves that we can trust Google with its mission: “To organize the world’s information and make it universally accessible and useful.”

Google is at the heart of two things that computer-using educators should care deeply and think critically about: the collection of massive amounts of our personal data and the control over our access to knowledge.

Neither of these is neutral. Again, these are driven by ideology and by algorithms.

Promoters of education technology describe this as “personalization.” More data collection and analysis will mean that the software bends to the student. To the contrary, as Seymour pointed out long ago, instead we find the computer programming the child. If we do not unpack the ideology, if the algorithms are all black-boxed, then “personalization” will be discriminatory. As Tressie McMillan Cottom has argued, “a ‘personalized’ platform can never be democratizing when the platform operates in a society defined by inequalities.”

If we want schools to be democratizing, then we need to stop and consider how computers are likely to entrench the very opposite. Unless we stop them.

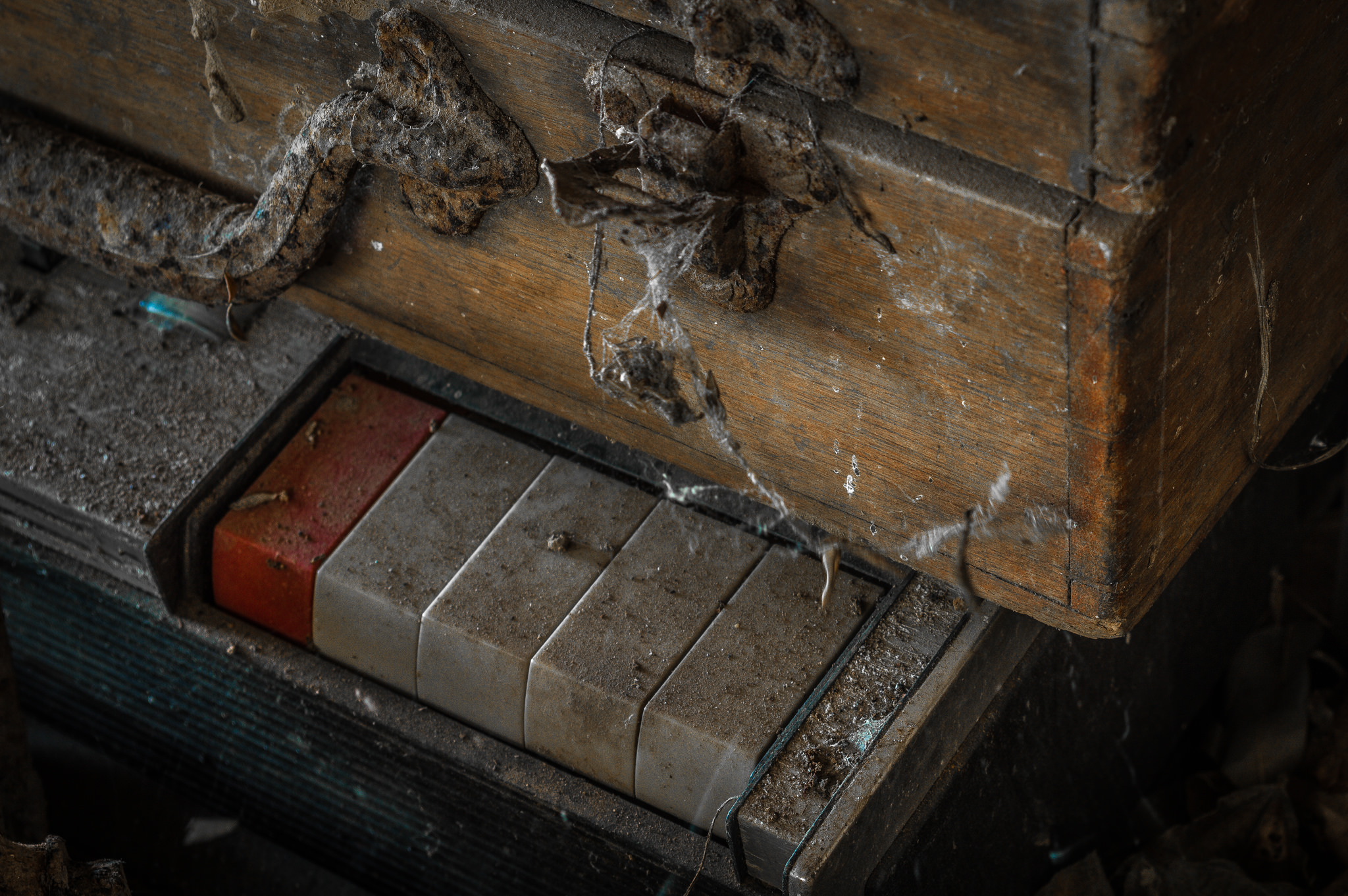

In the 1960s, the punchcard became a symbol of our dehumanization by computers and by a system, an educational system, that was inflexible, impersonal. We were being reduced to numbers. We were becoming alienated. These new machines were increasing the efficiency of a system that was setting us up for a life of drudgery and that was sending us off to war. We could not be trusted with our data or with our freedoms or with the machines themselves, we were told. The punchcards cautioned: “Do not fold, spindle, or mutilate.”

Students pushed back.

Let me quote here from Mario Savio, speaking on the stairs of Sproul Hall in 1964 — over fifty years ago, yes, but I think still one of the most relevant messages for us as we consider the state and the ideology of education technology:

We’re human beings!

There is a time when the operation of the machine becomes so odious, makes you so sick at heart, that you can’t take part; you can’t even passively take part, and you’ve got to put your bodies upon the gears and upon the wheels, upon the levers, upon all the apparatus, and you’ve got to make it stop. And you’ve got to indicate to the people who run it, to the people who own it, that unless you’re free, the machine will be prevented from working at all!

We’ve upgraded since then from punchcards to iPads. But underneath, the ideology — a reduction of humans to 1s and 0s, programmed and not programmable — remains. And we need to stop this ed-tech machine.